MCP vs. Traditional API Integration: Why Your AI Agent Architecture Needs to Change

MCP vs traditional API integration for AI agents. Comparison table, real cost numbers, and a practical migration path for enterprise teams.

Chase Dillingham

Founder & CEO, TrainMyAgent

You’ve got 15 custom API integrations powering your AI agent. Each one has its own auth flow, error handling, retry logic, and data transformation layer.

Now your team wants to add a new LLM provider.

That’s 15 integrations you need to rebuild. Or at least refactor. Again.

This is what happens when you build AI agent infrastructure on raw API calls. It works until it doesn’t. And “doesn’t” usually arrives the week your board wants a demo.

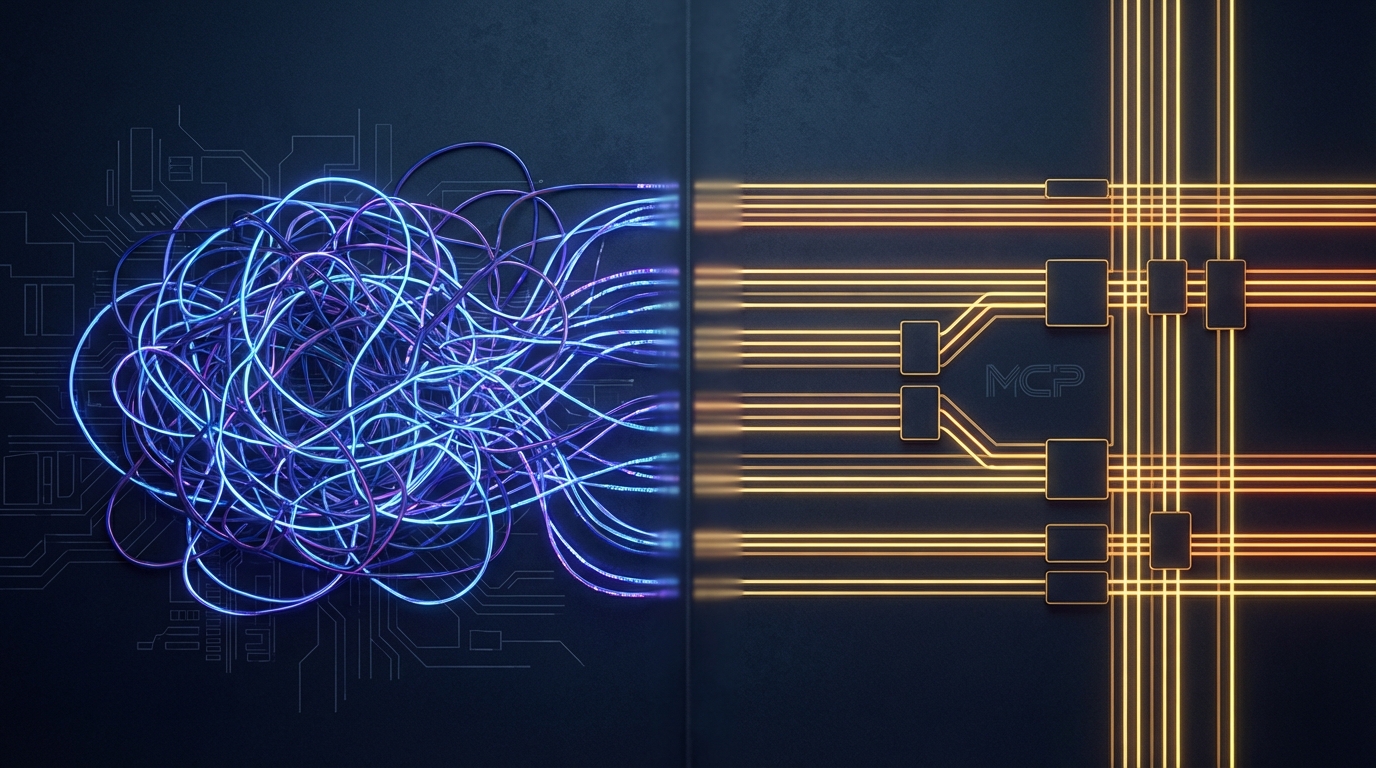

MCP (Model Context Protocol) replaces custom API-to-model plumbing with a single standardized protocol. One MCP server per tool. One MCP client per model. Everything talks to everything.

Here’s exactly how MCP compares to traditional API integration, when to use which, and how to migrate without blowing up your production systems.

The Traditional API Integration Model

Every enterprise team building AI agents has lived this:

- You pick an LLM (say, Claude)

- You identify the tools it needs (CRM, database, ticketing system, file storage)

- For each tool, you build a custom integration:

  - REST client with auth (OAuth, API keys, tokens)

  - Request/response mapping to the LLM’s tool calling format

  - Error handling and retry logic

  - Rate limiting

  - Data transformation (the tool’s schema to something the model understands)

- You hard-wire these into your agent’s codebase

- You maintain all of them. Forever.

This approach has worked. Millions of AI applications run on direct API integrations today. But the cost compounds.

Real numbers from enterprise deployments we’ve seen:

- Integration development: 2-4 weeks per tool, per model (source)

- Ongoing maintenance: 15-25% of initial build cost annually per integration (source)

- Model migration cost: Near-total rebuild when switching LLM providers

5 tools across 2 models = 10 integrations. At 3 weeks each, that’s 30 weeks of engineering time before your agent does anything useful. Then 15-25% of that annually to keep the lights on.

That’s not scalable. That’s a maintenance trap.

How MCP Changes the Architecture

MCP flips the model.

Instead of building point-to-point integrations between every model and every tool, you build:

- One MCP server per tool (exposes the tool’s capabilities via the MCP protocol)

- One MCP client per model (already built into Claude, ChatGPT, Gemini, Copilot)

The MCP server for your Salesforce instance works with every MCP-compatible model. The MCP client in Claude works with every MCP server. No rebuilding when you add a model. No rebuilding when you add a tool.

The MCP SDKs reached 97 million monthly downloads as of February 2026 (source). This is the direction the industry has locked in.

Head-to-Head Comparison

| Dimension | Traditional API Integration | MCP |
|---|---|---|
| Integration per tool | Custom per model + tool pair | One MCP server per tool, works with all models |
| Discovery | Hard-coded; agent must know tools in advance | Dynamic; agent discovers available tools at runtime |
| Auth model | Custom per integration | Standardized (with enterprise features on 2026 roadmap) |
| Schema definition | OpenAPI/Swagger, manually mapped to LLM format | Built into protocol; tools self-describe parameters and return types |
| Transport | HTTP/REST, GraphQL, gRPC | JSON-RPC over stdio, SSE, or Streamable HTTP |
| Model switching cost | High: rebuild integrations for new model’s format | Zero: MCP servers are model-agnostic |
| Composability | Low: each integration is standalone | High: agents compose tools from multiple servers |
| Maintenance burden | Per integration | Per server (shared across all models) |
| Community ecosystem | Per-vendor SDKs | Thousands of shared MCP servers (source) |
| Maturity | Decades of tooling | ~18 months, rapidly maturing |

Where MCP Wins

1. Tool Discovery

With traditional APIs, your agent knows what tools exist because you hard-coded them. Add a new tool? Update the agent code, redeploy.

MCP servers advertise their capabilities dynamically (source). Your agent connects to a server and asks “what can you do?” The server responds with its full tool catalog, parameter schemas, and descriptions. The model decides which tools to use based on the user’s request.
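To make the “what can you do?” exchange concrete, here is a minimal in-process sketch of the `tools/list` round trip. It assumes a single hypothetical tool (`lookup_account`) and skips the initialization handshake and the real transport; `handle_request` and `discover_tools` are illustrative names, not part of any SDK.

```python
import json

# Hypothetical catalog held by an MCP server (tool name and schema are made up).
TOOLS = [
    {
        "name": "lookup_account",
        "description": "Fetch a CRM account by its ID.",
        "inputSchema": {
            "type": "object",
            "properties": {"account_id": {"type": "string"}},
            "required": ["account_id"],
        },
    },
]


def handle_request(raw: str) -> str:
    """Server side: answer a JSON-RPC 2.0 request. Only tools/list is sketched."""
    request = json.loads(raw)
    if request.get("method") == "tools/list":
        response = {"jsonrpc": "2.0", "id": request["id"], "result": {"tools": TOOLS}}
    else:
        # Standard JSON-RPC "Method not found" error for anything else.
        response = {
            "jsonrpc": "2.0",
            "id": request.get("id"),
            "error": {"code": -32601, "message": "Method not found"},
        }
    return json.dumps(response)


def discover_tools(send) -> list:
    """Client side: ask the server for its catalog and return the tool list."""
    request = {"jsonrpc": "2.0", "id": 1, "method": "tools/list"}
    response = json.loads(send(json.dumps(request)))
    return response["result"]["tools"]


catalog = discover_tools(handle_request)
print([tool["name"] for tool in catalog])
```

Nothing in the client is specific to the CRM tool. Add a second entry to `TOOLS` and the client picks it up on the next discovery call, with no redeploy on the agent side.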

This is the difference between a static menu and a living toolkit.

2. Standardized Tool Descriptions

Every LLM provider has a slightly different format for describing tools. OpenAI uses function definitions. Anthropic uses tool specifications. Google has its own format.

MCP standardizes this. You describe your tool once in the MCP server. Every MCP client translates it to the model’s native format automatically. Write once, works everywhere.
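As a rough illustration of what that client-side translation involves, the sketch below maps one MCP tool description onto two provider shapes. The target layouts reflect the OpenAI Chat Completions and Anthropic Messages tool formats as we understand them, so check the current provider docs before relying on them; the `search_tickets` tool is hypothetical.

```python
# One MCP-style tool description (hypothetical tool, illustrative schema).
MCP_TOOL = {
    "name": "search_tickets",
    "description": "Search the ticketing system by keyword.",
    "inputSchema": {
        "type": "object",
        "properties": {"query": {"type": "string"}},
        "required": ["query"],
    },
}


def to_openai_tool(mcp_tool: dict) -> dict:
    """Wrap the JSON Schema in an OpenAI-style function definition."""
    return {
        "type": "function",
        "function": {
            "name": mcp_tool["name"],
            "description": mcp_tool.get("description", ""),
            "parameters": mcp_tool["inputSchema"],
        },
    }


def to_anthropic_tool(mcp_tool: dict) -> dict:
    """Rename inputSchema to input_schema for an Anthropic-style tool spec."""
    return {
        "name": mcp_tool["name"],
        "description": mcp_tool.get("description", ""),
        "input_schema": mcp_tool["inputSchema"],
    }


print(to_openai_tool(MCP_TOOL)["function"]["name"])
print(sorted(to_anthropic_tool(MCP_TOOL)))
```

The JSON Schema for the parameters passes through untouched in both cases. Only the envelope around it changes, which is why this mapping can live in the client instead of in every integration.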

3. Composability

Traditional integrations are monolithic. Your Salesforce integration, your PostgreSQL integration, your Slack integration — they’re separate codebases that don’t know about each other.

MCP servers are composable by design. An agent orchestration layer can connect to 10 MCP servers simultaneously. The model can chain tool calls across servers in a single conversation: query the database, create a Salesforce record from the results, then post a summary to Slack.
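Here is a toy sketch of that database-to-Salesforce-to-Slack chain. It assumes in-process stand-ins (`FakeServer`) instead of real MCP connections, and a hypothetical `ToolRouter` that namespaces tools by server name. A real orchestration layer would let the model choose each call; here the sequence is hard-coded to show the routing.

```python
from typing import Callable, Dict, List, Tuple


class FakeServer:
    """Stand-in for an MCP server connection: a name plus callable tools."""

    def __init__(self, name: str, tools: Dict[str, Callable[..., str]]):
        self.name = name
        self.tools = tools

    def list_tools(self) -> List[str]:
        return sorted(self.tools)

    def call_tool(self, tool: str, arguments: dict) -> str:
        return self.tools[tool](**arguments)


class ToolRouter:
    """Merge the catalogs of several servers and route calls by qualified name."""

    def __init__(self, servers: List[FakeServer]):
        self.routes: Dict[str, Tuple[FakeServer, str]] = {}
        for server in servers:
            for tool in server.list_tools():
                self.routes[f"{server.name}.{tool}"] = (server, tool)

    def call(self, qualified_name: str, arguments: dict) -> str:
        server, tool = self.routes[qualified_name]
        return server.call_tool(tool, arguments)


# Three independent "servers" that know nothing about each other.
db = FakeServer("db", {"top_customer": lambda: "Acme Corp"})
crm = FakeServer("crm", {"create_record": lambda name: f"record for {name}"})
chat = FakeServer("chat", {"post": lambda text: f"posted: {text}"})
router = ToolRouter([db, crm, chat])

# Each step feeds the next, across server boundaries.
customer = router.call("db.top_customer", {})
record = router.call("crm.create_record", {"name": customer})
summary = router.call("chat.post", {"text": f"Created {record}"})
print(summary)
```

Prefixing tool names with the server name is one simple way to avoid collisions when two servers expose a tool with the same name. It is a design choice in this sketch, not something the protocol mandates.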

This is how agentic workflows actually work in production.

4. Vendor Independence

Locked into OpenAI’s function calling format? Switching to Claude means rewriting every integration.

MCP is open source (Apache 2.0). Your MCP servers work with any compliant client. Switch models, add models, mix models — your tool layer doesn’t change (source).

5. Faster Development

Building a basic MCP server takes hours, not weeks. The Python SDK (FastMCP) lets you expose a tool in under 20 lines of code (source). Compare that to building a full REST integration with auth, error handling, and model-specific formatting.

Our team has gone from “idea to working MCP server” in under an hour for standard integrations. Try that with a custom API connector.

Where Traditional APIs Still Win

Let me be honest. MCP doesn’t replace APIs everywhere.

High-Performance, High-Volume Data Pipelines

If you’re streaming millions of events per second through Kafka, MCP isn’t the right transport. MCP is designed for model-to-tool communication, not bulk data movement. Keep your ETL pipelines on traditional infrastructure.

Non-AI System-to-System Integration

MCP is specifically designed for AI model interactions. If two backend services need to talk to each other with no AI in the loop, REST/gRPC/GraphQL is still the right call. Don’t force MCP into places where a simple HTTP call works fine.

Mature, Stable Integrations That Don’t Need AI Access

If you’ve got a battle-tested Stripe integration that processes payments and it works flawlessly, don’t rewrite it as an MCP server unless you need an AI agent to interact with it directly.

Latency-Critical Paths

MCP adds a protocol layer. For sub-millisecond response requirements, direct API calls with optimized clients will outperform MCP’s JSON-RPC overhead. In practice, this matters less than you’d think for AI workloads (LLM inference already takes seconds), but it’s worth noting.

The Migration Path

You don’t rip out your existing integrations overnight. Here’s how enterprise teams are migrating:

Phase 1: Wrap Existing APIs

Build MCP servers that wrap your existing API integrations. Your REST client for Salesforce becomes the backend of a Salesforce MCP server. The server exposes the client’s operations as MCP tools. Your existing code still runs underneath.
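A wrapper of this kind can be very thin. The sketch below assumes a hypothetical `LegacyCrmClient` standing in for your existing REST client and handles only the `tools/call` method by hand. In practice an MCP SDK would take care of the protocol plumbing; the point is that the legacy client is reused unchanged.

```python
import json


class LegacyCrmClient:
    """Stand-in for an existing REST client; a real one would make HTTP calls."""

    def get_account(self, account_id: str) -> dict:
        return {"id": account_id, "name": "Acme Corp"}


class CrmToolServer:
    """Thin wrapper exposing the legacy client through MCP-style tool calls."""

    def __init__(self, client: LegacyCrmClient):
        self.client = client
        # Map tool names to small adapters around existing client methods.
        self.handlers = {"get_account": self._get_account}

    def _get_account(self, arguments: dict) -> dict:
        return self.client.get_account(arguments["account_id"])

    def handle_tools_call(self, request: dict) -> dict:
        """Dispatch a tools/call request and wrap the result as text content."""
        params = request["params"]
        payload = self.handlers[params["name"]](params.get("arguments", {}))
        return {
            "jsonrpc": "2.0",
            "id": request["id"],
            "result": {
                "content": [{"type": "text", "text": json.dumps(payload)}],
                "isError": False,
            },
        }


server = CrmToolServer(LegacyCrmClient())
response = server.handle_tools_call({
    "jsonrpc": "2.0",
    "id": 1,
    "method": "tools/call",
    "params": {"name": "get_account", "arguments": {"account_id": "001"}},
})
print(response["result"]["content"][0]["text"])
```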

Timeline: 1-2 days per integration for simple wrappers.

Phase 2: Build New Integrations MCP-First

Any new tool integration starts as an MCP server. No more custom API connectors for AI use cases. This stops the bleeding immediately.

Phase 3: Consolidate Duplicate Logic

Once you have MCP servers wrapping existing integrations, you’ll notice duplicate patterns: auth flows, error handling, retry logic. Consolidate these into shared MCP server middleware.
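Retry logic is a typical candidate for that shared layer. Below is a minimal sketch of a reusable decorator with exponential backoff, assuming a hypothetical `TransientError` that your API clients raise on retryable failures. The delay is set to zero in the demo so it runs instantly.

```python
import functools
import time


class TransientError(Exception):
    """Hypothetical marker for retryable failures (timeouts, 429s, 503s)."""


def with_retries(max_attempts: int = 3, base_delay: float = 0.5):
    """Retry a tool handler on TransientError with exponential backoff."""

    def decorator(func):
        @functools.wraps(func)
        def wrapper(*args, **kwargs):
            for attempt in range(1, max_attempts + 1):
                try:
                    return func(*args, **kwargs)
                except TransientError:
                    if attempt == max_attempts:
                        raise  # out of attempts: surface the error
                    time.sleep(base_delay * 2 ** (attempt - 1))

        return wrapper

    return decorator


calls = {"count": 0}


@with_retries(max_attempts=3, base_delay=0.0)
def flaky_lookup(account_id: str) -> str:
    """Demo handler that fails twice, then succeeds."""
    calls["count"] += 1
    if calls["count"] < 3:
        raise TransientError("upstream timeout")
    return f"account {account_id}"


result = flaky_lookup("42")
print(result, "after", calls["count"], "attempts")
```

Once this lives in one shared module, every MCP server in the fleet gets the same retry behavior, and a fix to the backoff policy is a single change rather than one per integration.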

Phase 4: Retire Legacy Connectors

As your MCP layer proves stable, retire the old custom integration code. The MCP server becomes the canonical way your AI agents interact with each tool.

Total migration timeline for a typical enterprise: 2-4 months to full MCP coverage, running in parallel with existing integrations the entire time. No big bang. No downtime.

The Real Cost Comparison

Let’s do the math on a typical enterprise with 3 AI models and 8 tools.

Traditional API Integration:

- 3 models x 8 tools = 24 integrations

- 3 weeks average build time each = 72 weeks of engineering

- Annual maintenance at 20% = 14.4 weeks/year

- Model migration (adding 1 new model) = 8 new integrations = 24 weeks

MCP Architecture:

- 8 MCP servers to build + 3 MCP clients (pre-built, no build work) = 8 components to build

- 1 week average build time each = 8 weeks of engineering

- Annual maintenance at 20% = 1.6 weeks/year

- Model migration (adding 1 new model) = 0 new MCP servers = 0 weeks

Year 1 savings: 64 weeks of engineering time. Year 2+ savings: ~13 weeks annually in reduced maintenance, plus zero cost for model additions.

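Those aren’t theoretical numbers. That’s the structural advantage of N + M over N x M: with N models and M tools, you build one server per tool and reuse one pre-built client per model, instead of building an integration for every model-tool pair.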
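The arithmetic above is easy to re-run with your own numbers. This small sketch reproduces the scenario from this section (3 models, 8 tools, 3 weeks per custom integration, 1 week per MCP server, 20% annual maintenance). The function names are illustrative, and the per-item estimates are the article’s assumptions, not measurements.

```python
def integration_weeks(models: int, tools: int, weeks_each: float) -> float:
    """Point-to-point: one integration per (model, tool) pair."""
    return models * tools * weeks_each


def mcp_weeks(tools: int, weeks_per_server: float) -> float:
    """MCP: one server per tool; clients are assumed to be pre-built."""
    return tools * weeks_per_server


MODELS, TOOLS = 3, 8
MAINTENANCE_RATE = 0.20  # annual maintenance as a fraction of build cost

traditional_build = integration_weeks(MODELS, TOOLS, weeks_each=3)
mcp_build = mcp_weeks(TOOLS, weeks_per_server=1)
build_savings = traditional_build - mcp_build
maintenance_savings = MAINTENANCE_RATE * build_savings

# Adding one model means a full new set of integrations, or no new servers.
extra_model_traditional = integration_weeks(1, TOOLS, weeks_each=3)
extra_model_mcp = 0

print("build weeks:", traditional_build, "vs", mcp_build)
print("year-1 build savings:", build_savings)
print("annual maintenance savings:", round(maintenance_savings, 1))
print("cost of adding a model:", extra_model_traditional, "vs", extra_model_mcp)
```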

How TMA Approaches This

We build production AI agents with MCP as the default integration layer. Every pilot we ship uses MCP servers for tool access. When a client’s infrastructure requires direct API integration for specific use cases, we build that too. But MCP is the starting point, not the afterthought.

The result: pilots ship faster, agents are more composable, and clients aren’t locked into any single model provider.

Frequently Asked Questions

Can MCP servers wrap existing REST APIs?

Yes. The most common MCP migration pattern is building MCP servers that wrap existing API clients. Your current REST integration becomes the backend of an MCP server. The AI model interacts through MCP while your proven API code still runs underneath.

Does MCP replace OpenAPI/Swagger?

No. OpenAPI describes REST APIs for developers. MCP describes tools for AI models. They serve different audiences. You can use OpenAPI-documented APIs as the backend for MCP servers.

Is MCP slower than direct API calls?

MCP adds a thin JSON-RPC protocol layer. For AI workloads where LLM inference takes 1-30 seconds, the protocol overhead (milliseconds) is negligible. For sub-millisecond system-to-system calls, direct APIs are more appropriate.

Do I need to rewrite my agent to use MCP?

If your agent framework supports MCP clients (most do now), you configure MCP servers rather than rewriting. The switch is typically configuration, not code.

What happens if MCP adoption stalls?

MCP is open source with 97M monthly SDK downloads and backing from every major AI provider. But even in a worst case, MCP servers are thin wrappers — the underlying API integration logic is reusable regardless.

Can MCP handle authentication and authorization?

MCP supports authentication today, with enterprise-grade features (SSO integration, gateway controls) on the 2026 roadmap. For production deployments now, teams implement auth at the MCP server level using their existing identity infrastructure.

How does MCP handle errors from underlying APIs?

MCP has a standardized error response format. Your MCP server catches API errors and translates them into MCP error responses that the model can understand and act on, rather than crashing the conversation.
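As a sketch of that translation step: the MCP specification lets a tool report an execution failure inside a normal result by setting `isError` to true, so the model sees the failure as content it can reason about. The `UpstreamApiError` class and the `create_ticket` handler below are hypothetical.

```python
class UpstreamApiError(Exception):
    """Hypothetical error raised by an underlying API client."""

    def __init__(self, status: int, message: str):
        super().__init__(message)
        self.status = status


def call_tool_safely(handler, arguments: dict) -> dict:
    """Run a tool handler and turn upstream failures into an MCP tool result."""
    try:
        text = handler(arguments)
        return {"content": [{"type": "text", "text": text}], "isError": False}
    except UpstreamApiError as exc:
        # Report the failure as tool output so the model can retry or explain.
        message = f"Upstream API returned {exc.status}: {exc}"
        return {"content": [{"type": "text", "text": message}], "isError": True}


def create_ticket(arguments: dict) -> str:
    """Demo handler: rejects tickets without a title, like a strict API would."""
    if not arguments.get("title"):
        raise UpstreamApiError(422, "title is required")
    return f"created ticket '{arguments['title']}'"


ok = call_tool_safely(create_ticket, {"title": "Printer on fire"})
failed = call_tool_safely(create_ticket, {})
print(ok["content"][0]["text"])
print(failed["isError"], failed["content"][0]["text"])
```

Protocol-level problems, such as an unknown method or malformed parameters, would instead go back as JSON-RPC error responses. Only failures inside the tool itself take the `isError` path shown here.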


About the Author

Chase Dillingham builds AI agent platforms that deliver measurable ROI. Former enterprise architect with 15+ years deploying production systems.